Computer vision and AI researcher with a Master's from ETH Zürich. I specialize in deep learning for robotic perception, with experience in real-time neural network deployment on embedded GPU platforms. My work spans object detection, SLAM, and 3D reconstruction.

Education

- ETH Zürich, Master in Robotics, Systems and Control, Sep. 2022 - Sep. 2025

- ETH Zürich, Bachelor in Electrical Engineering and Information Technology, Sep. 2019 - Sep. 2022

Experience

- Rheinmetall Air Defense AG, Deep Learning Engineer Intern, Feb. 2024 - Aug. 2024

Selected Publications

DiskChunGS: Large-Scale 3D Gaussian SLAM Through Chunk-Based Memory Management

Casimir Feldmann, Maximum Wilder-Smith, Vaishakh Patil, Michael Oechsle, Michael Niemeyer, Keisuke Tateno, Marco Hutter

Recent advances in 3D Gaussian Splatting (3DGS) have demonstrated impressive results for novel view synthesis with real-time rendering capabilities. However, integrating 3DGS with SLAM systems faces a fundamental scalability limitation: methods are constrained by GPU memory capacity, restricting reconstruction to small-scale environments. We present DiskChunGS, a scalable 3DGS SLAM system that overcomes this bottleneck through an out-of-core approach that partitions scenes into spatial chunks and maintains only active regions in GPU memory while storing inactive areas on disk. Our architecture integrates seamlessly with existing SLAM frameworks for pose estimation and loop closure, enabling globally consistent reconstruction at scale. We validate DiskChunGS on indoor scenes (Replica, TUM-RGBD), urban driving scenarios (KITTI), and resource-constrained Nvidia Jetson platforms. Our method uniquely completes all 11 KITTI sequences without memory failures while achieving superior visual quality, demonstrating that algorithmic innovation can overcome the memory constraints that have limited previous 3DGS SLAM methods.
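The out-of-core idea in this abstract (spatial chunks, with only the active ones held in memory and the rest stored on disk) can be illustrated with a minimal sketch. This is not the DiskChunGS implementation: the `ChunkStore` class, its parameters, the pickle file layout, and the use of plain Python lists in place of GPU-resident Gaussians are all assumptions made for illustration.

```python
import math
import pickle
import tempfile
from pathlib import Path


class ChunkStore:
    """Hypothetical chunk cache: chunks near the camera stay in memory,
    all other chunks are serialized to disk."""

    def __init__(self, root, chunk_size=10.0, radius=1):
        self.root = Path(root)
        self.chunk_size = chunk_size  # edge length of a cubic chunk (assumed metres)
        self.radius = radius          # resident chunks around the camera, per axis
        self.active = {}              # chunk key -> points (stand-in for GPU memory)

    def key_for(self, position):
        # Integer grid coordinates of the chunk containing a 3D position.
        return tuple(math.floor(c / self.chunk_size) for c in position)

    def _path(self, key):
        return self.root / ("chunk_%d_%d_%d.pkl" % key)

    def _load(self, key):
        # Read a chunk back from disk, or start an empty one if it is new.
        path = self._path(key)
        if path.exists():
            with path.open("rb") as f:
                return pickle.load(f)
        return []

    def _evict(self, key):
        # Write the chunk to disk and drop it from the in-memory set.
        with self._path(key).open("wb") as f:
            pickle.dump(self.active.pop(key), f)

    def update_active(self, camera_position):
        # Keep only the chunks within `radius` of the camera's chunk resident.
        cx, cy, cz = self.key_for(camera_position)
        r = self.radius
        wanted = {
            (cx + dx, cy + dy, cz + dz)
            for dx in range(-r, r + 1)
            for dy in range(-r, r + 1)
            for dz in range(-r, r + 1)
        }
        for key in list(self.active):
            if key not in wanted:
                self._evict(key)
        for key in wanted:
            if key not in self.active:
                self.active[key] = self._load(key)

    def insert(self, point):
        # Add a point to its chunk, loading the chunk first if needed.
        key = self.key_for(point)
        if key not in self.active:
            self.active[key] = self._load(key)
        self.active[key].append(point)


def demo():
    # Insert a point, move the camera away (evicting its chunk), then return.
    with tempfile.TemporaryDirectory() as tmp:
        store = ChunkStore(tmp, chunk_size=10.0, radius=0)
        store.update_active((1.0, 1.0, 1.0))
        store.insert((1.0, 2.0, 3.0))
        store.update_active((55.0, 1.0, 1.0))
        evicted = (0, 0, 0) not in store.active
        store.update_active((1.0, 1.0, 1.0))
        return evicted, store.active[(0, 0, 0)]
```

In this sketch the resident set is bounded by the neighbourhood size, `(2 * radius + 1) ** 3` chunks, rather than by the total scene extent, which is the property an out-of-core design relies on.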

NeRFmentation: Improving Monocular Depth Estimation with NeRF-based Data Augmentation

Casimir Feldmann*, Niall Siegenheim*, Nikolas Hars, Lovro Rabuzin, Mert Ertugrul, Luca Wolfart, Marc Pollefeys, Zuria Bauer, Martin R. Oswald (* equal contribution)

SyntheticData4CV 2024 (Workshop at ECCV), 2024

The capabilities of monocular depth estimation (MDE) models are limited by the availability of sufficient and diverse datasets. In the case of MDE models for autonomous driving, this issue is exacerbated by the linearity of the captured data trajectories. We propose a NeRF-based data augmentation pipeline to introduce synthetic data with more diverse viewing directions into training datasets and demonstrate the benefits of our approach to model performance and robustness. Our data augmentation pipeline, which we call NeRFmentation, trains NeRFs on each scene in a dataset, filters out subpar NeRFs based on relevant metrics, and uses them to generate synthetic RGB-D images captured from new viewing directions. In this work, we apply our technique in conjunction with three state-of-the-art MDE architectures on the popular autonomous driving dataset, KITTI, augmenting its training set of the Eigen split. We evaluate the resulting performance gain on the original test set, a separate popular driving dataset, and our own synthetic test set.

Towards Robust and Efficient On-board Mapping for Autonomous Miniaturized UAVs

Tommaso Polonelli, Casimir Feldmann, Vlad Niculescu, Hanna Müller, Michele Magno, Luca Benini

2023 9th International Workshop on Advances in Sensors and Interfaces (IWASI), 2023

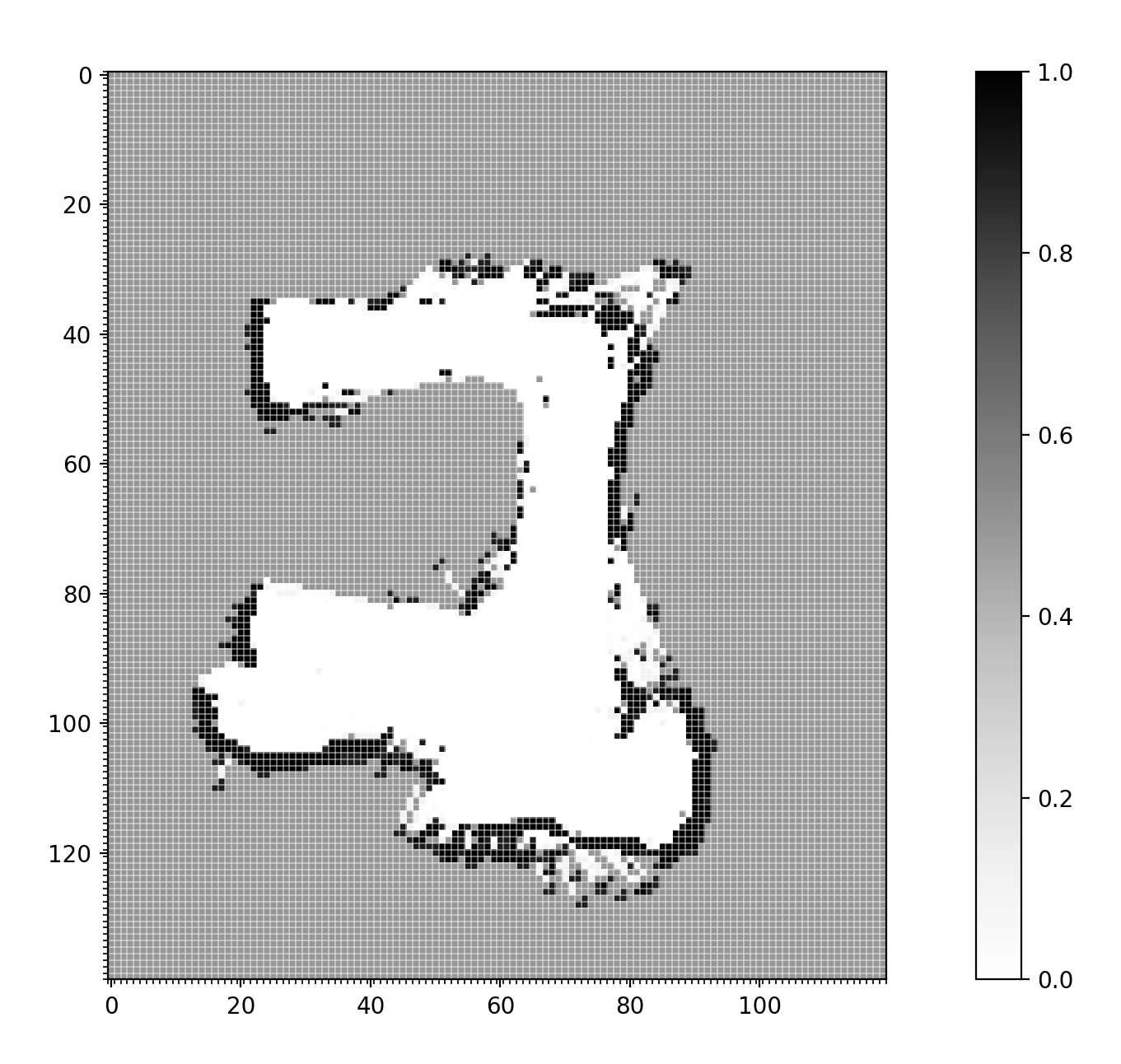

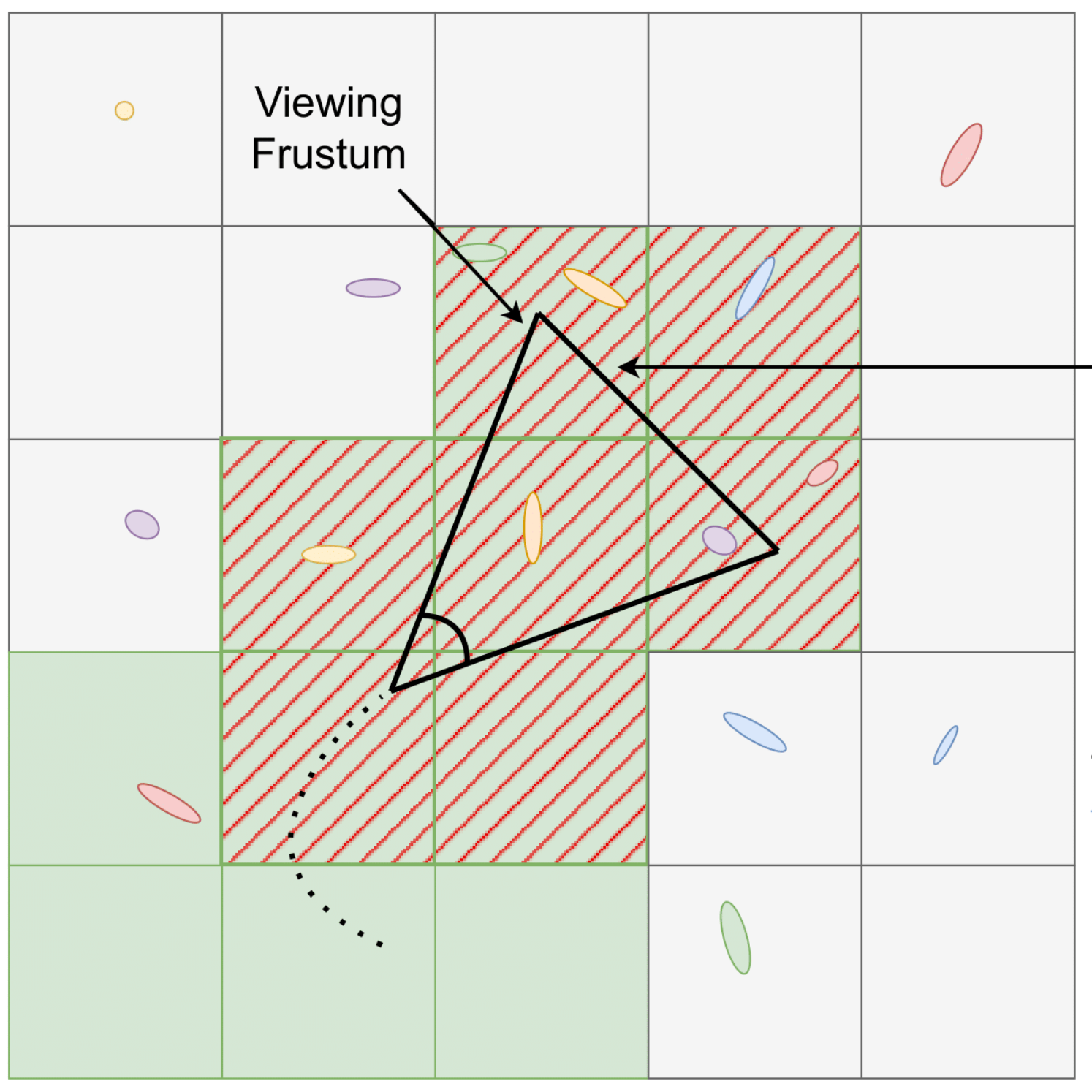

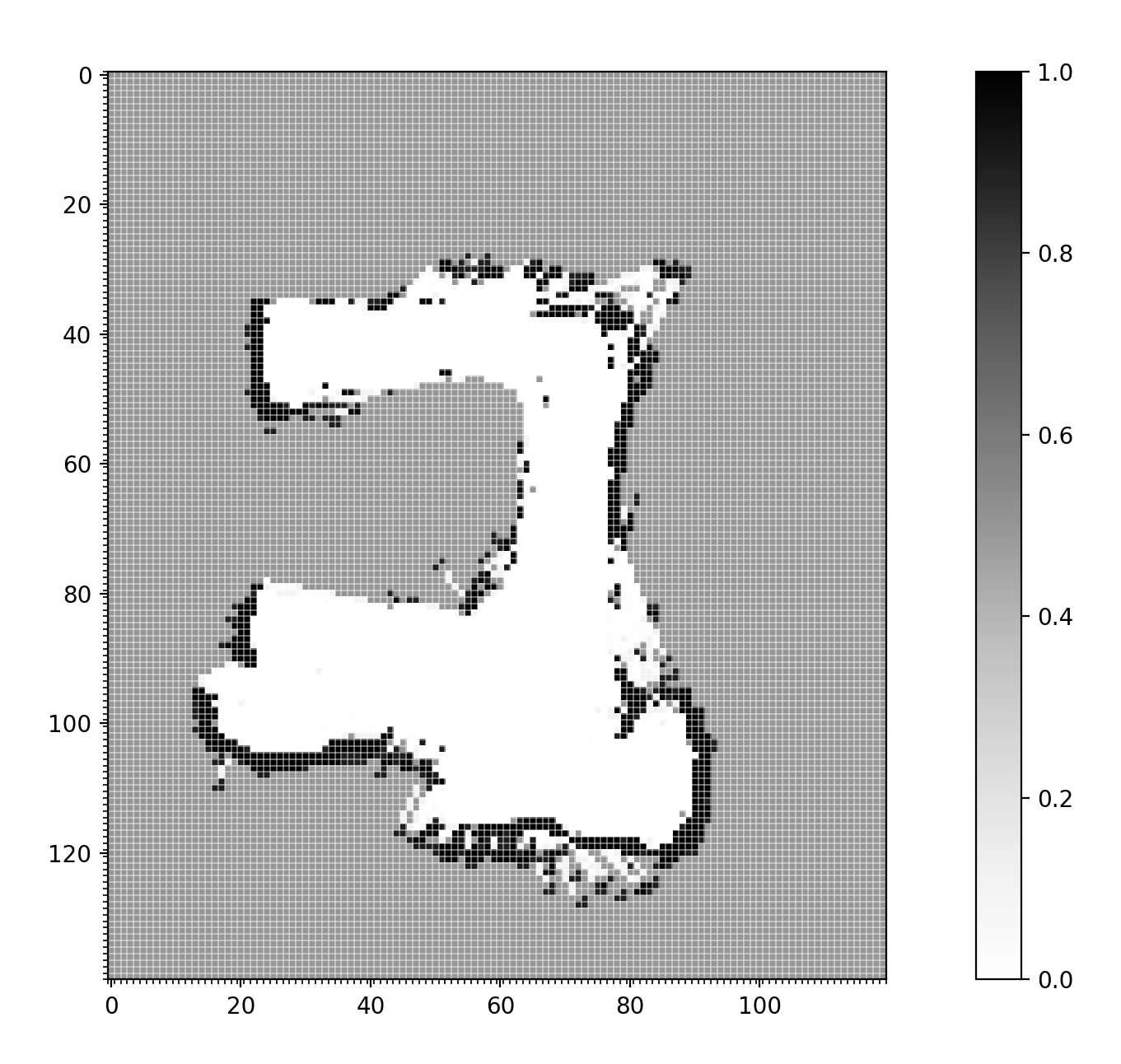

The demand for autonomous nano-sized Unmanned Aerial Vehicles (UAVs) has risen due to their small size and agility, allowing for flight in cluttered indoor environments. However, their small size also significantly limits the payload as well as the battery size and computational resources. Especially the scarcity of memory poses a significant obstacle to generating high-resolution occupancy maps. This work presents an on-board 2-dimensional occupancy mapping system for centimeter-scale UAVs using a miniature 64-zone Time of Flight sensor. Experimental evaluations on the Crazyflie 2.1 nano-UAV have demonstrated that produced maps feature a resolution of 10 cm at mapping velocities up to 1.5 m/s, while covering an area of maximum 400 m².
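As a back-of-the-envelope check on why memory is the limiting factor, the reported figures (10 cm resolution, up to 400 m² coverage) imply a grid of 40,000 cells. The short sketch below works through that arithmetic. The bits-per-cell values are illustrative assumptions, not figures taken from the paper, and the function names are hypothetical.

```python
def grid_cells(area_m2, resolution_cm):
    """Number of square cells needed to tile an area at a given resolution."""
    area_cm2 = area_m2 * 100 * 100  # 1 m^2 = 10,000 cm^2
    return area_cm2 // (resolution_cm * resolution_cm)


def grid_bytes(cells, bits_per_cell):
    """Bytes needed to store the grid, rounded up to a whole byte."""
    return (cells * bits_per_cell + 7) // 8


cells = grid_cells(area_m2=400, resolution_cm=10)

# Assumed encodings: 8 bits (e.g. a log-odds value), 2 bits
# (free / occupied / unknown), or 1 bit (occupied or not) per cell.
footprint = {bits: grid_bytes(cells, bits) for bits in (8, 2, 1)}
```

Under these assumptions, `cells` is 40,000 and the map occupies 40,000, 10,000, or 5,000 bytes for 8, 2, or 1 bits per cell respectively.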